Imagine seeing a video of a world leader announcing a major policy change, or a clip of yourself saying something you never uttered. In the digital age, seeing is no longer believing. This is the reality of deepfakes, a rapidly evolving technology that uses artificial intelligence (AI) to create hyper-realistic but entirely fabricated media. While the technology itself is a marvel of engineering, its potential for misuse is alarming, turning “fake news” into a visceral, high-fidelity experience. Let’s take a look at deepfakes and what is being done to stop them.

The Reality of Deepfakes

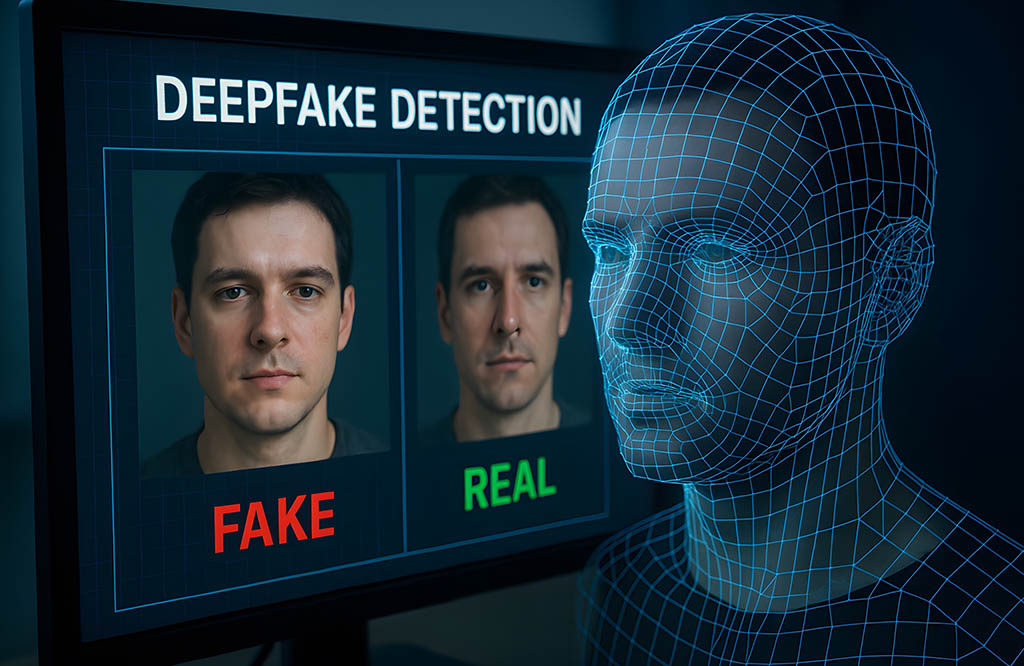

The term deepfake is a portmanteau of deep learning and fake. It refers to media, most commonly video and audio, that has been manipulated using advanced machine learning, often Generative Adversarial Networks (GANs).

In a GAN, two AI models are pitted against each other: the generator, which creates the fake content, and the discriminator, which tries to detect the fabrication. Over many training iterations, the generator learns to produce increasingly convincing results to fool the discriminator. Eventually, the output becomes so polished that it is nearly indistinguishable from reality to the human eye or ear.
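To make the generator-versus-discriminator loop concrete, here is a minimal toy sketch in plain Python. It is nowhere near a real deepfake model: the "media" is a single number, the generator and discriminator are one-line formulas, and every name and setting (`train_toy_gan`, the target mean of 4.0, the learning rate) is an assumption chosen purely for illustration.

```python
import math
import random


def sigmoid(t: float) -> float:
    """Numerically stable logistic function."""
    if t >= 0:
        return 1.0 / (1.0 + math.exp(-t))
    e = math.exp(t)
    return e / (1.0 + e)


def train_toy_gan(steps: int = 200, batch: int = 32, lr: float = 0.05, seed: int = 0):
    """Toy 1-D GAN. 'Real' samples are numbers drawn around 4.0 (an assumption)."""
    rng = random.Random(seed)
    a, b = 1.0, 0.0  # generator: fake = a * noise + b
    w, c = 0.0, 0.0  # discriminator: D(x) = sigmoid(w * x + c), i.e. P(x is real)

    for _ in range(steps):
        real = [rng.gauss(4.0, 1.0) for _ in range(batch)]
        noise = [rng.gauss(0.0, 1.0) for _ in range(batch)]
        fake = [a * z + b for z in noise]

        # Discriminator step: gradient ascent on log D(real) + log(1 - D(fake)).
        gw = gc = 0.0
        for x in real:
            d = sigmoid(w * x + c)
            gw += (1.0 - d) * x
            gc += 1.0 - d
        for x in fake:
            d = sigmoid(w * x + c)
            gw -= d * x
            gc -= d
        w += lr * gw / batch
        c += lr * gc / batch

        # Generator step: gradient ascent on log D(fake), so the fakes
        # move toward whatever the discriminator currently scores as real.
        ga = gb = 0.0
        for z in noise:
            d = sigmoid(w * (a * z + b) + c)
            ga += (1.0 - d) * w * z
            gb += (1.0 - d) * w
        a += lr * ga / batch
        b += lr * gb / batch

    return a, b, w, c
```

Even after a single step, the generator's offset `b` shifts away from zero toward the real data, because the discriminator has just learned that larger values look "real". In a toy like this the parameters can oscillate rather than settle, which hints at why training real GANs is delicate.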

Malicious Applications and Social Impact

While the creative potential for deepfakes in cinema and gaming is vast, the current landscape is dominated by malicious applications. Deepfakes can be weaponized to create fabricated videos of politicians making inflammatory statements. During sensitive periods such as elections, a well-timed leak of a fake video can incite social unrest before the truth can catch up.

We are also seeing a rise in voice phishing, where AI mimics the voice of a CEO or family member to authorize fraudulent wire transfers. In 2019, a UK energy firm was scammed out of about $243,000 when an employee believed he was speaking to his boss.

One of the most insidious uses is the creation of non-consensual synthetic imagery. This is often used to silence or humiliate women, journalists, and activists, causing profound psychological and reputational harm.

Furthermore, the mere existence of deepfakes enables the “liar’s dividend”: people can claim that genuine incriminating evidence is fake, creating a post-truth environment where accountability becomes nearly impossible.

Technical and Legal Defenses

The defense against synthetic media is a multi-layered arms race involving technology, law, and education. Researchers are developing AI detection algorithms that look for biological tells that AI still struggles to reproduce, such as the subtle skin-tone changes caused by a person's pulse, inconsistent eye-blinking patterns, or unnatural facial muscle movements.
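As a rough illustration of the blink-pattern idea, the sketch below counts blinks in a per-frame "eye openness" series and flags clips whose blink rate falls outside a plausible range. Everything here is an assumption for illustration: the function names, the 0.2 closed-eye threshold, and the 5 to 40 blinks-per-minute window are placeholders, and real detectors combine many signals with trained models rather than a single rule.

```python
def count_blinks(openness, closed_threshold=0.2):
    """Count open-to-closed transitions in a per-frame eye-openness series."""
    blinks = 0
    eye_closed = False
    for value in openness:
        if value < closed_threshold and not eye_closed:
            blinks += 1          # eye has just closed: a new blink starts
            eye_closed = True
        elif value >= closed_threshold:
            eye_closed = False   # eye is open again
    return blinks


def blink_rate_is_suspicious(openness, fps=30.0, min_per_min=5.0, max_per_min=40.0):
    """Flag a clip whose blink rate is outside an assumed plausible range."""
    if not openness:
        raise ValueError("openness series is empty")
    minutes = len(openness) / fps / 60.0
    rate = count_blinks(openness) / minutes
    return rate < min_per_min or rate > max_per_min
```

For example, a one-minute clip in which the eyes never close would be flagged, while a clip with around fifteen short blinks would pass. A flag like this is only a prompt for closer inspection, not proof of manipulation.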

Initiatives like the Coalition for Content Provenance and Authenticity (C2PA) aim to create a “nutrition label” for digital media. By attaching cryptographically signed metadata, a file can carry a verifiable history of its origin and any edits made along the way.

Legal frameworks are also evolving. The EU AI Act and other legislation around the world introduce transparency obligations, including requirements to disclose when content has been artificially generated or manipulated. Laws are also being updated to make the creation of non-consensual deepfakes a specific criminal offense, moving beyond traditional defamation or copyright law.
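The idea of cryptographic metadata that travels with a file can be sketched in a few lines. The example below is a simplified stand-in, not the C2PA format: it records a SHA-256 fingerprint of the content alongside a creator name and edit list, then signs that record with a shared-secret HMAC. Real provenance systems use public-key signatures and certificates instead, and the names `make_manifest` and `verify_manifest` are hypothetical.

```python
import hashlib
import hmac
import json


def make_manifest(content: bytes, creator: str, edits: list, key: bytes) -> dict:
    """Build a signed provenance record for a piece of content."""
    claim = {
        "creator": creator,
        "edits": edits,
        "sha256": hashlib.sha256(content).hexdigest(),  # fingerprint of the file
    }
    payload = json.dumps(claim, sort_keys=True).encode("utf-8")
    # HMAC is an assumption standing in for a real public-key signature.
    signature = hmac.new(key, payload, hashlib.sha256).hexdigest()
    return {"claim": claim, "signature": signature}


def verify_manifest(content: bytes, manifest: dict, key: bytes) -> bool:
    """Check that neither the content nor its recorded history was altered."""
    claim = manifest["claim"]
    payload = json.dumps(claim, sort_keys=True).encode("utf-8")
    expected = hmac.new(key, payload, hashlib.sha256).hexdigest()
    signature_ok = hmac.compare_digest(expected, manifest["signature"])
    actual_hash = hashlib.sha256(content).hexdigest()
    content_ok = hmac.compare_digest(actual_hash, claim["sha256"])
    return signature_ok and content_ok
```

If a single byte of the file changes, or someone rewrites the creator or edit history, verification fails. That is the property a provenance label depends on: the record cannot be quietly separated from, or altered independently of, the media it describes.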

Cultivating Digital Vigilance

On a personal and corporate level, media literacy and vigilance are essential. We must treat digital content with skepticism and verify shocking videos against multiple established news outlets. Organizations should adopt zero-trust communication: if an executive makes a high-stakes request via video or audio, it should be verified through a secondary, out-of-band channel, such as a call back to a known phone number. The fight against deepfakes is not a one-and-done victory; it is a continuous evolution.

As the barrier to entry for creating these fakes drops (it now takes only a few photos and a standard laptop), the responsibility falls on all of us to be more discerning consumers of digital media. The goal is not just to spot the fake, but to rebuild a foundation of digital trust.
